The Laboratoire de Perception Visuelle et Sociale distinguished itself at the NeuroQAM 2020 conference, where two of its students were awarded prizes for the quality of their presentations.

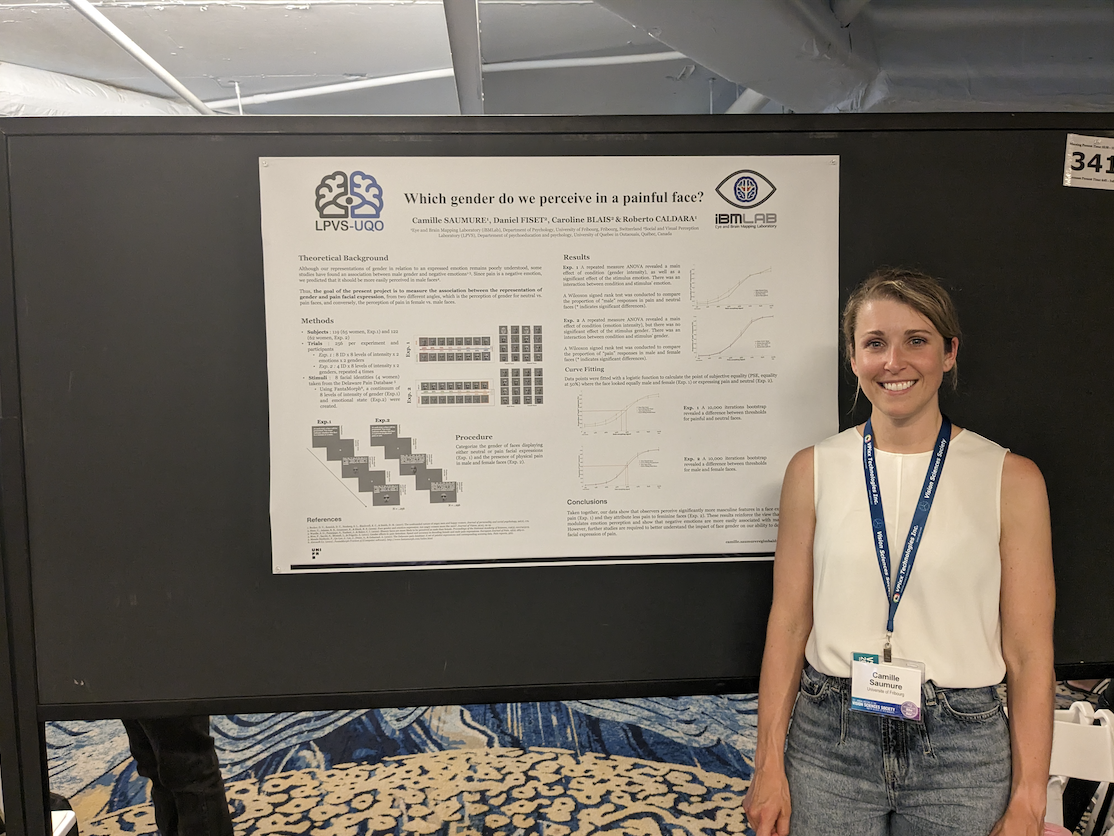

Marie-Pier Plouffe-Demers received the prize for the best “datablitz” presentation. Here is a summary of her presentation (translated from French):

Impact of Gender on Discrimination of Pain Intensity

Marie-Pier Plouffe-Demers, Ph.D. student, Université du Québec à Montréal

SAUMURE, Camille ; FISET, Daniel ; CORMIER, Stéphanie ; KUNZ, Miriam ; BLAIS, Caroline

Studies have shown that women have an advantage in discriminating facial expressions of pain, yet few have sought to understand the underlying differences in visual strategies. This study used the Bubbles method to measure the performance and visual strategies of 72 participants (37 men). Two “bubblized” avatars (2 genders × 4 intensity levels) were presented in each of the 1512 trials, and participants had to determine which of the two expressed the higher level of pain. Accuracy was held constant at 75%, so the number of bubbles required to reach this threshold served as an indicator of task performance. Results show that men required more bubbles (M=56, SD=23.16) than women (M=44.5, SD=20.81), suggesting better performance for women [t(1,70)=2.22, p<0.029]. Although both genders used similar regions of the face (i.e. the eyes, eyebrows and nose), men used smaller regions than women [t(1,70)=2.43, p=0.017].
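As a rough consistency check on the group comparison above, the t statistic can be recomputed from the reported means and standard deviations. This is only a sketch under stated assumptions: the 35 women are inferred from 72 participants minus 37 men, and a pooled-variance (Student) t-test is assumed because the reported degrees of freedom are 70. The function name `pooled_t` is hypothetical and is not taken from the study.

```python
import math

def pooled_t(mean_a, sd_a, n_a, mean_b, sd_b, n_b):
    """Student's t for two independent groups, from summary statistics."""
    df = n_a + n_b - 2
    # Pooled variance weights each group's variance by its degrees of freedom.
    pooled_var = ((n_a - 1) * sd_a**2 + (n_b - 1) * sd_b**2) / df
    # Standard error of the difference between the two group means.
    se = math.sqrt(pooled_var * (1 / n_a + 1 / n_b))
    return (mean_a - mean_b) / se, df

# Men: M=56, SD=23.16, n=37 (reported). Women: M=44.5, SD=20.81, n=35 (inferred).
t_stat, df = pooled_t(56.0, 23.16, 37, 44.5, 20.81, 35)
print(f"t({df}) = {t_stat:.2f}")
```

Under these assumptions the result comes out close to the reported value of 2.22; the small gap is plausibly due to rounding in the published means and standard deviations.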

Marie-Claude Desjardins also received the prize for the best oral presentation. Here is a summary of her presentation (translated from French):

Link Between Visual Representations of the Facial Expression of Pain and Estimating Pain in Others

Marie-Claude Desjardins, Bachelor's student, Université du Québec en Outaouais

BLAIS, Caroline ; LÉVESQUE-LACASSE, Alexandra ; CHARBONNEAU, Carine ; FISET, Daniel ; CORMIER, Stéphanie

The underestimation of pain felt by others is a well-documented phenomenon, yet the role that visual perception plays in this bias remains poorly understood. We tested whether sensitivity to variations in the intensity of others’ pain, and the tendency to underestimate the pain reported by others, were linked to variations in visual representations (VRs) of the facial expression of pain. 73 participants completed a reverse correlation task to extract their VRs; their sensitivity and estimation bias were measured by having them estimate the pain levels of individuals shown in videos. Both sensitivity and estimation bias were linked to variations in VRs. Higher sensitivity was linked to more intense VRs (χ²(1)=23.5, p<0.001) and a higher saliency of the eyebrow region (χ²(5)=47.2, p<0.001). Underestimation of pain was linked to less intense VRs (χ²(1)=11.7, p<0.001) and a higher saliency of the mouth region (χ²(5)=41.7, p<0.001).

The LPVS congratulates the two students on their prizes and on the quality of their presentations. The team also congratulates all the students and researchers involved in these projects.